"""

To try the examples in the browser:

1. Type code in the input cell and press

Shift + Enter to execute

2. Or copy paste the code, and click on

the "Run" button in the toolbar

"""

```python
# The standard way to import NumPy:
import numpy as np

# Create a 2-D array, set every second element in
# the last two rows, and find the max per row:
x = np.arange(15, dtype=np.int64).reshape(3, 5)
x[1:, ::2] = -99
x
# array([[  0,   1,   2,   3,   4],
#        [-99,   6, -99,   8, -99],
#        [-99,  11, -99,  13, -99]])

x.max(axis=1)
# array([ 4,  8, 13])

# Generate normally distributed random numbers:
rng = np.random.default_rng()
samples = rng.normal(size=2500)
samples
```

Nearly every scientist working in Python draws on the power of NumPy.

NumPy brings the computational power of languages like C and Fortran to Python, a language much easier to learn and use. With this power comes simplicity: a solution in NumPy is often clear and elegant.
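To make the "clear and elegant" point concrete, here is a minimal sketch comparing a plain-Python loop with the equivalent vectorized NumPy expression. The function names `sum_of_squares_loop` and `sum_of_squares_numpy` are hypothetical, chosen only for illustration; the vectorized version moves the loop out of the interpreter and into NumPy's compiled code.

```python
import numpy as np

def sum_of_squares_loop(values):
    # Pure-Python version: the interpreter executes every iteration.
    total = 0.0
    for v in values:
        total += v * v
    return total

def sum_of_squares_numpy(values):
    # Vectorized version: squaring and summing run inside NumPy's compiled loops.
    arr = np.asarray(values, dtype=np.float64)
    return float(np.sum(arr * arr))

data = np.linspace(0.0, 1.0, 100_001)
loop_result = sum_of_squares_loop(data.tolist())
numpy_result = sum_of_squares_numpy(data)
print(loop_result, numpy_result)
```

The two results agree up to floating-point rounding; they need not match bit for bit, because `np.sum` uses pairwise summation rather than a strictly left-to-right loop.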

NumPy's API is the starting point when libraries are written to exploit innovative hardware, create specialized array types, or add capabilities beyond what NumPy provides.

| Array library | Capabilities and application areas |
|---|---|
| Dask | Distributed arrays and advanced parallelism for analytics, enabling performance at scale. |
| CuPy | NumPy-compatible array library for GPU-accelerated computing with Python. |
| JAX | Composable transformations of NumPy programs: differentiate, vectorize, just-in-time compilation to GPU/TPU. |
| Xarray | Labeled, indexed multi-dimensional arrays for advanced analytics and visualization. |
| Sparse | NumPy-compatible sparse array library that integrates with Dask and SciPy's sparse linear algebra. |
| PyTorch | Deep learning framework that accelerates the path from research prototyping to production deployment. |
| TensorFlow | An end-to-end platform for machine learning to easily build and deploy ML-powered applications. |
| MXNet | Deep learning framework suited for flexible research prototyping and production. |
| Arrow | A cross-language development platform for columnar in-memory data and analytics. |
| xtensor | Multi-dimensional arrays with broadcasting and lazy computing for numerical analysis. |
| Awkward Array | Manipulate JSON-like data with NumPy-like idioms. |
| uarray | Python backend system that decouples API from implementation; unumpy provides a NumPy API. |
| tensorly | Tensor learning, algebra and backends to seamlessly use NumPy, MXNet, PyTorch, TensorFlow or CuPy. |
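One way third-party array types plug into NumPy's API is through its dispatch protocols. As a minimal sketch, assuming only NumPy's documented `__array_ufunc__` protocol (NEP 13), the hypothetical `LoggedArray` class below wraps an ndarray so that ufuncs such as `np.sqrt` and `np.add` hand control to the wrapper, which records the call and rewraps the result. It only illustrates the hook; it is not how any library in the table is actually implemented.

```python
import numpy as np

class LoggedArray:
    """Hypothetical wrapper (illustration only): records the names of the
    NumPy ufuncs applied to it via the __array_ufunc__ protocol."""

    def __init__(self, data, log=None):
        self.data = np.asarray(data)
        # Share one log list across results derived from the same input.
        self.log = [] if log is None else log

    def __array_ufunc__(self, ufunc, method, *inputs, **kwargs):
        # Only handle plain element-wise calls such as np.add(a, b).
        # Returning NotImplemented for reduce/accumulate or out= makes
        # NumPy raise TypeError instead of mishandling those cases.
        if method != "__call__" or "out" in kwargs:
            return NotImplemented
        self.log.append(ufunc.__name__)
        # Unwrap LoggedArray inputs to plain ndarrays, then call the ufunc.
        raw = [x.data if isinstance(x, LoggedArray) else x for x in inputs]
        return LoggedArray(ufunc(*raw, **kwargs), self.log)

a = LoggedArray([1.0, 4.0, 9.0])
b = np.add(np.sqrt(a), 1.0)
print(b.data)  # [2. 3. 4.]
print(a.log)   # ['sqrt', 'add']
```

Because `LoggedArray` defines no arithmetic operators, the sketch calls `np.add` explicitly; NumPy's `numpy.lib.mixins.NDArrayOperatorsMixin` can supply `+`, `*`, and the other operators by routing them through `__array_ufunc__`.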

NumPy lies at the core of a rich ecosystem of data science libraries. A typical exploratory data science workflow might look like this:

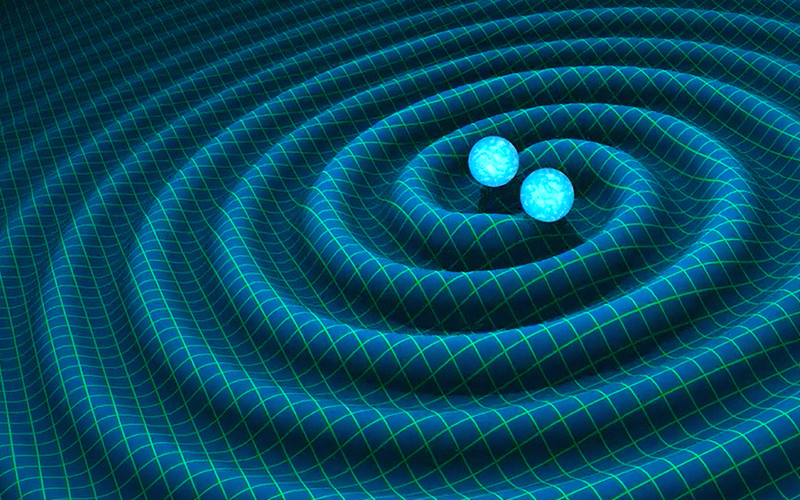

NumPy forms the basis of powerful machine learning and scientific computing libraries like scikit-learn and SciPy. As machine learning grows, so does the list of libraries built on NumPy. TensorFlow’s deep learning capabilities have broad applications, among them speech and image recognition, text-based applications, time-series analysis, and video detection. PyTorch, another deep learning library, is popular among researchers in computer vision and natural language processing. MXNet is another AI package, providing blueprints and templates for deep learning.
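Underneath many machine learning workflows sit plain NumPy array and linear-algebra calls. As a hedged illustration (not scikit-learn's or SciPy's actual code), an ordinary least-squares line fit can be written directly with `np.linalg.lstsq`; the synthetic data, seed, and variable names are assumptions made for the example.

```python
import numpy as np

# Synthetic data: y = 2x + 1 plus small Gaussian noise (seeded for reproducibility).
rng = np.random.default_rng(seed=0)
x = np.linspace(0.0, 10.0, 50)
y = 2.0 * x + 1.0 + rng.normal(scale=0.1, size=x.shape)

# Design matrix: one column for the slope, a column of ones for the intercept.
A = np.column_stack([x, np.ones_like(x)])

# Solve min ||A @ coef - y||^2; rcond=None uses a machine-precision cutoff.
coef, residuals, rank, singular_values = np.linalg.lstsq(A, y, rcond=None)
slope, intercept = coef
print(f"slope ~ {slope:.2f}, intercept ~ {intercept:.2f}")
```

The recovered slope and intercept land close to the true values of 2 and 1; higher-level libraries wrap this kind of computation in model objects with validation, regularization, and more robust solvers.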

NumPy is an essential component in the burgeoning Python visualization landscape, which includes Matplotlib, Seaborn, Plotly, Altair, Bokeh, HoloViz, VisPy, Napari, and PyVista, to name a few.

NumPy’s accelerated processing of large arrays allows researchers to visualize datasets far larger than native Python could handle.
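One way NumPy helps is by shrinking a huge array into something a plotting library can draw quickly. The sketch below uses min/max decimation, chosen here as an illustrative technique rather than the method of any particular library: it reshapes a long 1-D signal into fixed-size bins and keeps each bin's minimum and maximum, so peaks survive while the point count drops by orders of magnitude. The helper name `minmax_decimate` is hypothetical.

```python
import numpy as np

def minmax_decimate(signal, n_bins):
    """Hypothetical helper: return per-bin (min, max) of a 1-D signal.

    Trailing samples that don't fill a whole bin are dropped for simplicity.
    """
    signal = np.asarray(signal)
    bin_size = signal.size // n_bins
    if bin_size == 0:
        raise ValueError("n_bins must not exceed the number of samples")
    # View the trimmed signal as an (n_bins, bin_size) array; no Python loop.
    binned = signal[: n_bins * bin_size].reshape(n_bins, bin_size)
    return binned.min(axis=1), binned.max(axis=1)

# One million noisy samples reduced to 1,000 bins (2,000 values) for plotting.
rng = np.random.default_rng(seed=42)
t = np.linspace(0.0, 100.0, 1_000_000)
signal = np.sin(t) + 0.05 * rng.standard_normal(t.size)
lows, highs = minmax_decimate(signal, 1_000)
print(lows.shape, highs.shape)  # (1000,) (1000,)
```

Drawing both `lows` and `highs` (for example as a filled band) preserves short spikes that naive every-Nth-sample striding could skip entirely.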